Md Iftekher Hossain

Doctoral Researcher @ Cognitive Robotics Group, Tampere University | ✉️ iftekher.hossain21@gmail.com

👋 About Me

I am a Doctoral Researcher in Robotics, focusing on generalist robotic policy learning with spatial understanding. My research aims to develop data-efficient learning methods that enable robotic systems to generalize across tasks and environments, with a strong emphasis on real-world industrial deployment. I currently work on the PERFORM project within the Cognitive Robotics Group at Tampere University. My work in intelligent robotic manipulation began with my Master’s thesis at the Intelligent Robotics Group, Aalto University, where I studied zero-shot robotic policy learning using large language models and vision–language models. That experience shaped my current focus on data-efficient, generalizable robotic systems.

💼 Professional Experience

Doctoral Researcher

Cognitive Robotics Group, Tampere University

September 2025 – Present

- Surveying state-of-the-art Vision–Language–Action (VLA) models to identify open research gaps.

- Implementing and benchmarking SOTA VLA algorithms for robotic manipulation and generalization.

Research Assistant (Summer Intern)

Intelligent Robotics Group, Aalto University

June 2025 – August 2025

- Systematically evaluated foundation-model-based zero-shot imitation learning across six task families.

- Performed sim-to-real transfer of zero-shot manipulation on a real Franka Emika Panda robot.

- Conducted ablation studies to identify ways to improve video understanding and foundation-model-based manipulation performance.

- Integrated MoveIt Task Constructor with the Franka Emika Panda robot in simulation.

Master’s Thesis Worker

Intelligent Robotics Group, Aalto University

Thesis

January 2025 – May 2025

- Enhanced zero-shot trajectory generation using LLM-based reasoning.

- Introduced OAG and SOAG for transferring passive human demonstration knowledge to robotic execution in novel environments.

- Integrated audio transcription, improving OAG task recognition by 60%.

- Achieved 75% success on contact-rich manipulation tasks (pushing, pulling, reaching) over 12 execution phases.

- Developed a fully prompt-based imitation learning system requiring no robot-specific training data.

- Designed a framework for trajectory generation from passive video demonstrations using LLM reasoning and semantic abstraction.

- Leveraged vision–language models for zero-shot action sequence extraction and high-level planning.

- Implemented object detection, tracking, and semantic mapping in PyBullet using the Franka Emika Panda robot.

- Evaluated domain shift effects and devised robust task generalization techniques using SOAG abstraction.

- Awarded grade 5/5 (Excellent).

Data Scientist

SSL Wireless

November 2021 – August 2023

- Developed and deployed computer vision systems, including image segmentation for shop detection and size estimation, and object detection for variable-sized FMCG products.

- Integrated models into production systems using FastAPI and Docker, ensuring scalability and reliability.

- Improved national ID verification accuracy to 98.5%.

- Introduced a Mean Embeddings approach for multi-face recognition, increasing accuracy by 8% and reducing inference time by 5×.

- Built automated large-scale data scraping pipelines for social media analytics.

- Developed methods for shop image verification and automated invoice data parsing.

- Applied time-series forecasting to daily service usage data and implemented predictive strategies.

- Mentored junior team members and interns.
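The Mean Embeddings bullet above can be illustrated with a minimal sketch. Assuming each face image has already been mapped to a fixed-length embedding (for example by a FaceNet-style network), the idea shown here is to average each person's L2-normalized embeddings into a single prototype and match a query against one prototype per person instead of against every stored image; fewer comparisons is one plausible source of a speed-up. The function names, the 0.5 cosine threshold, and the toy 3-D vectors are hypothetical illustrations, not the production system.

```python
import math
from typing import Dict, List, Optional, Sequence, Tuple

Vector = List[float]


def l2_normalize(v: Sequence[float]) -> Vector:
    """Scale a vector to unit length so dot products equal cosine similarity."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in v]


def build_gallery(embeddings_by_person: Dict[str, List[Sequence[float]]]) -> Dict[str, Vector]:
    """Collapse each person's embeddings into one unit-length mean embedding (prototype)."""
    gallery: Dict[str, Vector] = {}
    for name, embeddings in embeddings_by_person.items():
        normed = [l2_normalize(e) for e in embeddings]
        dim = len(normed[0])
        # Element-wise mean of the normalized embeddings, then re-normalize.
        mean = [sum(e[i] for e in normed) / len(normed) for i in range(dim)]
        gallery[name] = l2_normalize(mean)
    return gallery


def identify(query: Sequence[float], gallery: Dict[str, Vector],
             threshold: float = 0.5) -> Tuple[Optional[str], float]:
    """Return the best-matching person, or None if below the (hypothetical) threshold."""
    q = l2_normalize(query)
    best_name: Optional[str] = None
    best_score = -1.0
    for name, prototype in gallery.items():
        # One comparison per person instead of one per stored face image.
        score = sum(a * b for a, b in zip(q, prototype))
        if score > best_score:
            best_name, best_score = name, score
    if best_score < threshold:
        return None, best_score
    return best_name, best_score


if __name__ == "__main__":
    # Toy 3-D "embeddings"; real face embeddings are typically 128-D or larger.
    gallery = build_gallery({
        "alice": [[1.0, 0.1, 0.0], [0.9, 0.0, 0.1]],
        "bob": [[0.0, 1.0, 0.1], [0.1, 0.9, 0.0]],
    })
    print(identify([0.95, 0.05, 0.05], gallery))  # best match: alice
```

Because the gallery stores one vector per person, matching cost grows with the number of identities rather than the number of enrolled images; the trade-off is that an averaged prototype can blur very different appearances of the same person.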

Research Assistant

Institute of Energy Technology (IET), CUET

March 2018 – April 2018

- Contributed to a research project on a Smart Water Meter Monitoring System using Python, MQTT, microcontrollers, sensors, and Raspberry Pi.

Projects

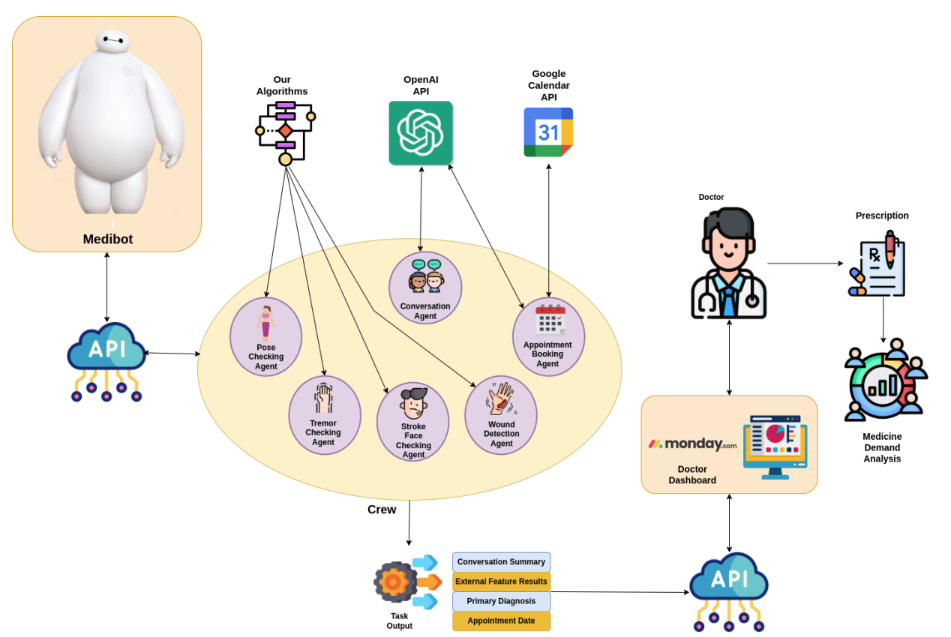

MediBot - An Interactive General Practitioner Robot

Developed a simulated robot on ROS 2 Humble (GitHub) with robotics and computer vision capabilities. The system uses a modular, multi-agent design in which each agent performs a specific task:

- General practitioner (GP) interaction using an LLM

- Pose analysis

- Tremor analysis

- Detection of stroke-related facial signs using computer vision

It also includes AI-driven appointment booking and remote doctor consultations, enhancing patient care.

Architecture:

Video Demo

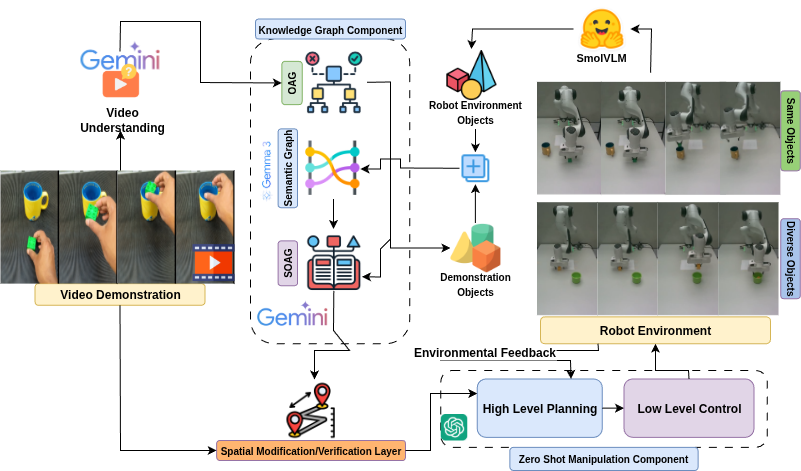

SOAG - Video-to-Robot Knowledge Transfer for Zero-Shot Manipulation

Developed SOAG (Spatially-Organized Abstraction for Generalization), a framework that uses foundation models to transfer knowledge from video demonstrations to robot manipulation in a zero-shot manner. It was evaluated across six task families, ranging from simple tasks to complex, contact-rich ones.

The system incorporates:

- Prompt engineering for LLM-based task and motion planning to guide robot behavior.

- Decision-Override Tools to process and refine LLM outputs for safer, intentional motions.

- OAG Video Abstraction for effective task demonstration encoding.

- Spatial Modification Layer to improve abstraction accuracy (~15% increase).

Results:

- 32 video demonstrations → 23 correct video abstractions.

- Of the 23 correct abstractions, 20 led to successful executions with the same objects and 21 with diverse objects.

- Achieved ~70% overall task execution accuracy, showing strong knowledge transfer across object variations.

Architecture:

Video Demo:

Video Demo-Demonstration

Video Demo-Execution

🚀 Skills

- Programming: Python, C/C++, JavaScript, Git, Bash scripting, LaTeX, HTML, Vim

- Operating Systems: Linux, Windows

- Technologies: Computer Vision, Machine Learning, Deep Learning, Natural Language Processing, Cloud Computing, MLOps

- Frameworks: TensorFlow, PyTorch, Keras, OpenCV, FastAPI, NumPy, Pandas, Matplotlib, Seaborn, Selenium, Hugging Face

📈 Skill Assessments

- Machine Learning, top 30% of 188K participants.

- Python (Programming Language), top 30% of 1 million participants.

- Problem Solving (Basic), HackerRank Skill Certification.

- Python (Basic), HackerRank Skill Certification.

🌟 Achievements

- CTO Appreciation Award, CTO Appreciation Award Giving Ceremony, SSL Wireless, Dec 2022.

- High Flyer Hero, Mid Year Performance Award, SSL Wireless, Sep 2022.

- Runner-Up, Soccerbot, NSU Bit Arena, Jan 2019.

- Winner, Idea Contest, Esho Robot Banai, Aug 2017.

📚 Publications

- “Fabrication of Smart Eye Controlled Wheelchair for Disabled Person”, Md. Anisur Rahman, Md. Abdur Rahman, Md. Imteaz Ahmed, and Md. Iftekher Hossain, International Conference on Big Data, IoT and Machine Learning (BIM 2021)

- “A Deep Learning Approach to Count People Using FaceNet Architecture”, Md. Iftekher Hossain and Md. Sakirul Alam, International Conference on Emerging Trends in Industry 4.0 (ETI 4.0 2021), Raigarh, Chhattisgarh, India

- “An Efficient Way to Recognize Faces Using Mean Embeddings”, Md. Iftekher Hossain, Sama‑E‑Shan and Homayun Kabir, IEEE First International Conference on Advances in Electrical, Computing, Communications and Sustainable Technologies (IEEE ICAECT 2021)

- “An Approach to Maximize the Integrated Safety System for Two Wheelers”, Md. Abdullah Al Mamun, Bably Das, Sama‑E‑Shan, and Md. Iftekher Hossain

- “Low Cost Deaf Communication System”, Md. Iftekher Hossain, Marzan Alam, and Md Rakibul Islam Prince, 1st National Conference on Energy Technology and Industrial Automation (NCETIA 2018)

📜 Certificates

- Machine Learning

- Mathematics for Machine Learning: Linear Algebra

- Mathematics for Machine Learning: Multivariate Calculus

- Deep Learning Specialization

- Neural Networks and Deep Learning

- Improving Deep Neural Networks: Hyperparameter Tuning, Regularization and Optimization

- Structuring Machine Learning Projects

- Convolutional Neural Networks

- Sequence Models

- Algorithmic Toolbox

- Advanced Deployment Scenarios with TensorFlow

- Deploy Models with TensorFlow Serving and Flask

- Version Control with Git

- Python Classes and Inheritance